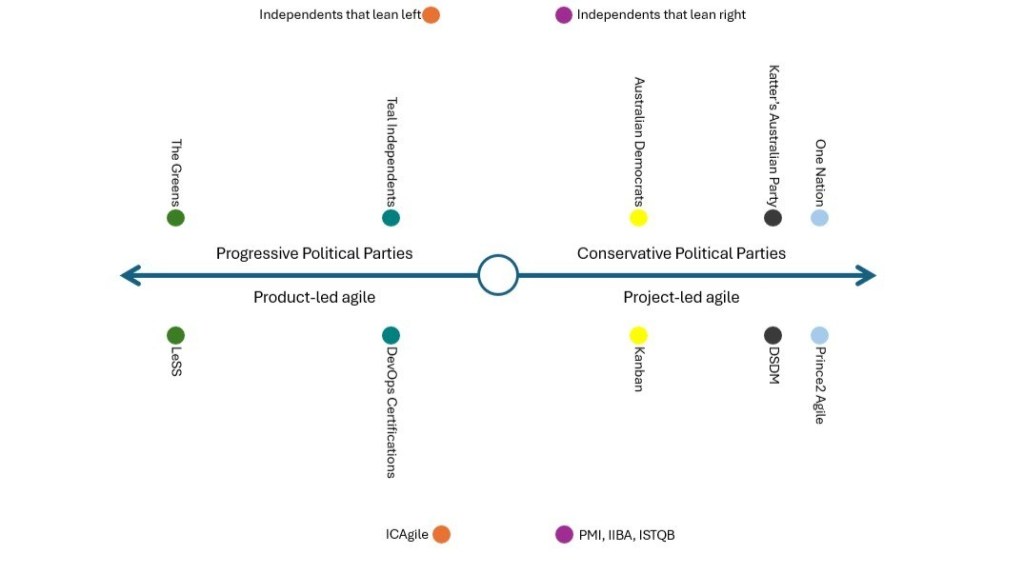

Please note: This is article 8 in a series that explores mapping agile certifications to what Daniel Luschwitz and I have coined the Agile Political Spectrum. The previous blogs in the series are available here:

- What if Agile Certifications were a Political Party?

- The Agile Political Landscape Series: PRINCE2 Agile and One Nation

- The Agile Political Landscape Series: LeSS and The Greens

- The Agile Political Landscape Series: DSDM and Katter’s Australian Party

- The Agile Political Landscape Series: DevOps and Teal Independents

- The Agile Political Landscape Series: Kanban and the Australian Democrats

- The Agile Political Landscape Series: ICAgile and the Left-Leaning Independents

A note on our political comparisons: These political comparisons are playful metaphors designed to illustrate philosophical positions on the agile spectrum. No certification body was harmed in the making of this analysis.

Every political spectrum has its right-leaning independents. Not party loyalists, not ideologues, but professionals who built their authority within an established discipline, created a constituency around it, and when the political winds shifted, didn’t abandon their ground. They absorbed the new language, translated it into terms their community already understood, and carried on.

In the agile certification world, that role belongs to PMI, IIBA and ISTQB.

The Project Management Institute (PMI), the International Institute of Business Analysis (IIBA), and the International Software Testing Qualifications Board (ISTQB) weren’t born from the agile movement. Each was built on the conviction that project management, business analysis, and software testing were distinct professional disciplines, each deserving its own body of knowledge, its own structured credential, and its own seat at the table. All three had developed substantial, globally recognised certification architectures on that premise long before agile became the dominant conversation in solutions delivery. And when that conversation became impossible to ignore, all three made the same move: they added agile to what they already offered, on their own terms.

PMI was the earliest and perhaps the most deliberate about it. The Project Management Institute had spent decades building the PMP into arguably the most recognised project management credential on the planet. When agile began reshaping how solutions were delivered, PMI had a problem: the profession it represented was being told, by increasingly loud voices, that its core assumptions were wrong. Rather than engage with that critique, PMI launched the PMI Agile Certified Practitioner, the PMI-ACP, in 2011. The message was clear: agile is a toolkit, and project managers can learn to use it. The PMI-ACP covers a broad range of agile and hybrid approaches, drawing on methodologies from across the spectrum to demonstrate that agile competency is an addition to the project manager’s repertoire, not a replacement for it. The role stayed intact. The credential adapted around it.

The absorption deepened from there. In 2019, PMI acquired Disciplined Agile from Scott Ambler and Mark Lines and, weeks later, FLEX from Al Shalloway’s Net Objectives, all of them among the more thoughtful independent voices in the agile community. Then came PMBOK 7 in 2021, the most complete move of all. The Guide abandoned the architecture that had defined the profession for decades – ten knowledge areas, forty-nine processes, the full procedural edifice – and restructured itself around twelve principles and eight performance domains, with a new vocabulary of value delivery, stewardship, and tailoring. Every principle could be reconciled with agile, lean, traditional, or hybrid ways of working. Presented as modernisation, it was also a reframing broad enough that almost no position within the delivery community sat outside it. The Guide no longer committed itself to a method; it committed itself to being the container within which methods live.

IIBA and ISTQB followed the same instinct. The question agile was really asking, whether specialist roles like the BA and the dedicated tester needed to exist in their traditional form within self-organising teams, was precisely the question neither body had any institutional interest in answering honestly. So they answered a different one. They asked how agile delivery changes the context these disciplines operate in, and built certifications around that. IIBA demonstrated this instinct directly: business analysis became “agile analysis”, the BABOK grew an agile extension, and a new certification emerged to recognise competency in delivering analysis within an agile context. ISTQB followed the same pattern. The vocabulary changed. The disciplines they described did not.

They weren’t alone in this approach. Across the professional credentialling landscape, a number of established bodies followed the same instinct, extending their frameworks to acknowledge agile without disturbing the structures those frameworks were built to protect. The pattern is consistent: take the new vocabulary, demonstrate how your discipline remains relevant within it, and issue a credential that bridges the two worlds. It’s not cynical. It’s what professional bodies do. They exist to conserve something, and they’re good at it.

So who are PMI, IIBA and ISTQB’s political counterparts?

As our discipline-first, reframe-rather-than-reform agile certifications, they map to the right-leaning independents: professionals who enter the political arena not to change the system but to make sure their constituency is protected within it. Independent of the major parties, pragmatic in their dealings, and deeply conservative in the one area that matters most: the continued relevance of the professional community they represent.

The parallels are direct. Right-leaning independents don’t arrive in parliament with a transformation agenda. They arrive with a specific brief: protect these jobs, represent this industry, make sure this community isn’t left behind by whatever the major parties decide to do next. PMI, IIBA and ISTQB carry exactly that brief. Their mandate isn’t to reimagine how solutions are built. It’s to ensure that project managers, business analysts, and testers retain a credentialled, respected place in whatever delivery model their organisations adopt. Agile is the context. It is not the cause.

This is also why the agile content within these certifications tends to feel like an additional module rather than a rewrite of the core. It is worth acknowledging that PMI made genuine strides here: the shift to a principles-based model in their later standards represented real philosophical movement, not just rebranding. But even with that evolution, the agile extensions across all three bodies still sit on top of what was already there: the established role structures, the knowledge domains, and the professional boundaries all remain structurally intact. Agile is introduced as a context the discipline now operates within, not a lens that reexamines whether the discipline, in its current form, is still the right tool. The project manager still manages. The BA still analyses. The tester still tests. The world changed around them, and the certification acknowledges that. The professional identity at the centre did not move.

Right-leaning independents are notably pragmatic about language. When the political winds shift, they update their messaging before they update their positions. All three bodies demonstrated this instinct. All three speak agile fluently, and they mean it, but the fluency is in service of protecting the ground they already hold. The translation is genuine. The priorities underneath it are unchanged.

For practitioners, that’s not necessarily a problem. A project manager holding the PMI-ACP brings something real to an agile context: breadth across multiple approaches, familiarity with hybrid environments, and a structured lens for managing complexity. An experienced business analyst who understands stakeholder facilitation, business value, and how to navigate complex organisational constraints brings real value to an agile team. A tester with genuine capability in risk, coverage, and quality thinking is an asset in any delivery environment. These certifications offer a structured bridge between deep existing expertise and the agile context it now operates within. That’s worth something, and it shouldn’t be dismissed.

For organisations, understand what you’re investing in: practitioners equipped to apply their discipline within agile delivery, not practitioners equipped to question whether that discipline, as currently structured, is what the team actually needs. In environments where project management, BA, and testing functions are well established and role clarity matters, these may be exactly the right credentials. In environments genuinely rethinking their operating model, the role boundaries these certifications reinforce may be part of what you’re trying to move beyond.

Right-leaning independents serve a real constituency and they serve it honestly. They’re not in parliament to lead a revolution. They’re there to make sure that when the revolution arrives, the people they represent still have a seat at the table. PMI, IIBA and ISTQB do exactly the same thing. They didn’t reshape agile. They made sure agile had room for the professionals who were already in the room. Whether that’s the credential you need depends entirely on whether your goal is to fit agile around your existing structure, or to let agile challenge it.

This article was originally published on LinkedIn by Daniel Luschwitz.
